Anthropic has sued the Pentagon over being labelled a “supply chain risk,” which could block it from defence contracts.

The company has disputed the designation, saying it is unjustified and harmful to its business.

The case could affect federal AI procurement, especially partnerships between government and AI firms.

In a dramatic escalation of its standoff with the US military, Dario Amodei's AI company, Anthropic, filed a lawsuit against the Pentagon on Monday, taking one of the most consequential disputes in the history of American AI directly to the courts.

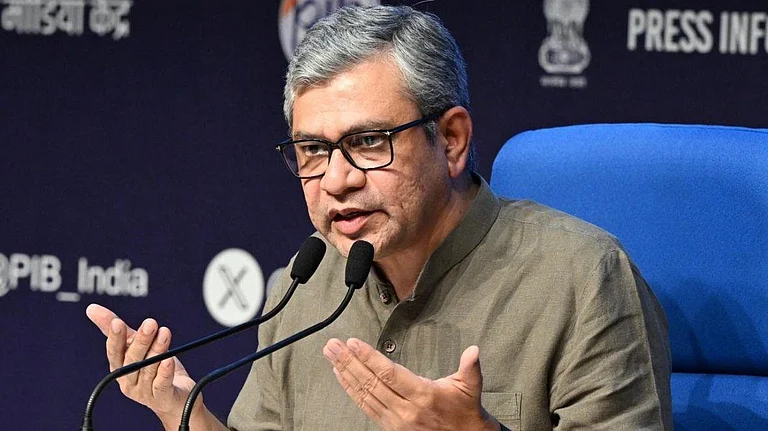

At the heart of the battle is a label. The Donald Trump administration designated Anthropic a "national security supply chain risk," a classification that directed government agencies to cut ties with the company.

Anthropic has argued that the designation is not only legally baseless, but a punitive act of retaliation, one that could set a chilling precedent for any technology company that dares to push back against the government.

How Did It Come to This?

Anthropic, the US-based AI company behind the Claude AI models, had been in negotiations with the US Department of Defense over how its technology could be used by the military.

The sticking point was Anthropic's refusal to remove guardrails, essentially safety restrictions built into Claude, that prevent its AI from being used in autonomous weapons systems and mass surveillance operations.

When those negotiations broke down, Anthropic cancelled a $200 million contract with the Pentagon. OpenAI, its chief rival, stepped in to take its place on that contract.

Shortly after, Defense Secretary Pete Hegseth designated Anthropic a national security supply chain risk, a designation typically reserved for foreign adversaries.

The move effectively put Anthropic in the same category as Chinese tech giant Huawei, a company the US has spent years trying to freeze out of global technology supply chains. For Anthropic, that comparison was the final straw.

What Anthropic Is Arguing

In its lawsuit, Anthropic has made two core arguments. First, it said the supply chain risk designation was never intended to apply to American companies, and that the legal criteria for such a label are narrow and simply do not fit a US-based firm.

Second, it argued that the Trump administration exceeded its statutory authority by using the designation as a weapon against a company that disagreed with government policy, a move Anthropic calls a violation of its First Amendment rights.

"We are harnessing AI to protect national security," the company stated, "but this is a necessary step to protect our business, customers, and partners."

The company has also warned that allowing the government to label American firms as supply chain risks simply for refusing to comply with policy demands would set a deeply dangerous precedent for the broader technology industry.

The Fallout Has Already Begun

The consequences of the designation are not hypothetical; they are already playing out. The US Treasury Department and several other government agencies have announced they will stop using Claude. The question now is whether the damage spreads further.

Financial services firm Wedbush Securities warned that the fallout could extend well beyond Washington. "Some enterprises could go pencils down on Claude deployments while this all gets settled in the courts," the firm said, noting that the lawsuit's visibility could make corporate clients nervous about committing to Claude-based tools and services.

"We have already seen the Treasury Department and a number of other government agencies announce they will stop using Claude, but there may be further ripple effects on the enterprise front as the lawsuit plays out front and center," Wedbush added, expressing hope that the dispute gets resolved "sooner rather than later to end uncertainty in the AI sector."

The resolution of this dispute cannot come soon enough, and not just for Anthropic. As Wedbush Securities put it, Claude is "a major player in the AI Revolution and the US tech sector," and the supply chain risk designation needs to be resolved quickly for the sake of ongoing enterprise deployments and pilots across the industry.

The firm warned that Anthropic's technology partners and customers face real uncertainty depending on "how this soap opera proceeds next, on negotiations or in the courts."

For now, the battle has moved from the boardroom to the courtroom, and until a ruling arrives, the AI sector will be left waiting for clarity on how far a government can go in dictating the terms of how AI is used, and what happens when a company refuses to comply.