OpenAI partners with the Pentagon to deploy AI models in classified networks

The deal establishes three red lines barring surveillance and autonomous weapons usage

OpenAI retains full discretion over its safety stack via cloud-only deployment architecture

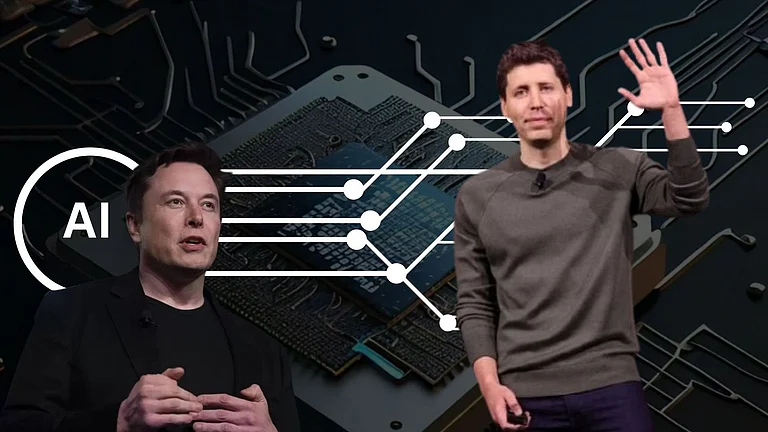

Days after a public standoff between Anthropic and the US Department of Defense, rival AI company OpenAI announced that it has reached an agreement with the Pentagon to deploy its AI models within the department’s classified network.

“We reached an agreement with the Pentagon to deploy advanced AI systems in classified environments, and we have requested that they make similar opportunities available to all AI companies,” OpenAI CEO Sam Altman said.

Altman stated that the agreement includes more safeguards than any previous arrangement for classified AI deployments, including Anthropic’s.

He outlined three primary red lines: no use of OpenAI technology for mass domestic surveillance; no use to direct autonomous weapons systems; and no use for high-stakes automated decision-making, such as social credit systems.

“In our agreement, we protect our red lines through a more expansive, multi-layered approach. We retain full discretion over our safety stack, we deploy via the cloud, cleared OpenAI personnel remain in the loop, and we have strong contractual protections. This is in addition to existing protections under US law,” Altman said.

OpenAI–Pentagon Agreement Details

Under the agreement, OpenAI will provide its cloud-only deployment architecture, including a company-managed safety stack aligned with its principles. The company said it is not providing “guardrails-off” or non-safety-trained models, nor is it deploying models on edge devices, where they could be used for autonomous lethal weapons.

The deployment architecture will allow independent verification that the red lines are not crossed, including through the running and updating of classifiers.

OpenAI will also have cleared, forward-deployed engineers supporting the government, with safety and alignment researchers involved in oversight.

The Department of Defense may use the AI system for lawful purposes consistent with applicable laws, operational requirements, and established safety and oversight protocols.

The system will not independently direct autonomous weapons in situations where law, regulation, or department policy requires human control. Nor will it take over other high-stakes decisions that require approval by a human decision-maker. In accordance with DoD Directive 3000.09 (dated January 25, 2023), any use of AI in autonomous or semi-autonomous systems must undergo rigorous verification, validation, and testing before deployment.

Why Anthropic Did Not Reach a Similar Deal

In an FAQ section, OpenAI addressed the question of why it was able to reach an agreement when Anthropic was not.

“Based on what we know, we believe our contract provides stronger guarantees and more responsible safeguards than earlier agreements, including Anthropic’s original contract. We believe our red lines are more enforceable because deployment is limited to the cloud, our safety stack remains operational as designed, and cleared OpenAI personnel remain involved,” the company said.

It added that it does not know why Anthropic was unable to reach a similar agreement and expressed hope that other AI labs would consider comparable arrangements in the future.