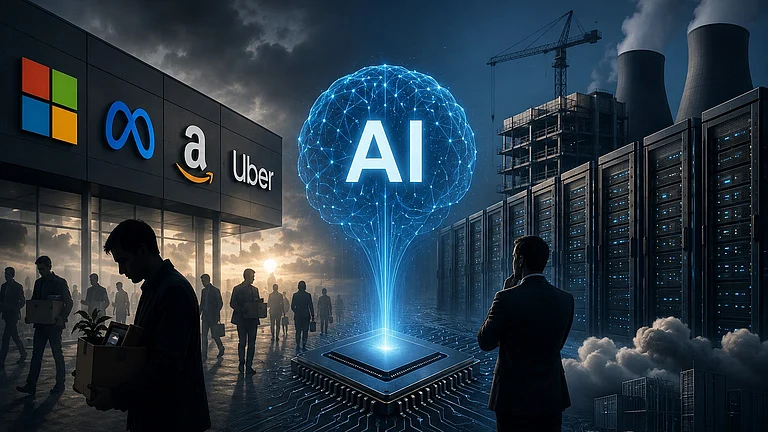

Information Technology Industry Council warns Pentagon over proposed action against Anthropic.

Supply-chain risk label could bar Anthropic from US defence contractors.

Tech leaders including Amazon and OpenAI express concern.

Dispute centres on AI military use restrictions versus Pentagon’s “all-lawful use” policy.

Some of the biggest names in the American technology industry have broken their silence over the growing confrontation between Anthropic and the US Department of Defence, now known as the Department of War. A prominent industry body has expressed alarm at the Pentagon's reported move to designate the AI company a supply-chain risk, a label that could effectively bar it from working with military contractors across the United States.

According to a Reuters report, the Information Technology Industry Council, whose membership includes Amazon, Nvidia, Apple and OpenAI, wrote to the Pentagon on Wednesday warning that it was troubled by reports that a supply-chain risk designation was being considered in response to what the letter described as a procurement dispute.

Anthropic is not named in the letter directly, but the subject of the concern is not in doubt.

Anthropic's chief executive Dario Amodei has held direct conversations with Amazon CEO Andy Jassy — Amazon being one of Anthropic's most significant backers — as well as with other major investors and partners, people familiar with the matter told Reuters.

Venture capital firms Lightspeed and Iconiq have been in contact with Anthropic's leadership, and are also understood to be canvassing other investors about possible ways to resolve the situation. Some in the investment community have gone further still, reaching out to contacts within the Trump administration in an effort to ease the tension before it hardens into something more permanent, the report said.

The central concern driving all of this activity is straightforward: avoiding an outright ban on Anthropic's AI systems across Pentagon contractors.

The Conflict

The conflict between Anthropic and the Department of War has been simmering for months. At its core is a fundamental disagreement about the terms on which AI companies should allow their technology to be used by the military.

Designed with safety in mind, the AI lab's Claude has been touted as one of the most advanced and responsible AI tools on the market. In mid-2025, Anthropic won a significant contract, worth around US$200mn, to integrate Claude into Pentagon systems, including classified networks.

The company’s internal policies prohibit using Claude for certain military applications: most notably, fully autonomous weapons that can select and engage targets without humans in the loop, and mass domestic surveillance of citizens.

The Pentagon has pressed AI developers to abandon specific usage restrictions in favour of a broad "all-lawful use" standard. Anthropic has declined, maintaining its prohibitions on Claude being used to power autonomous weapons systems or to enable mass domestic surveillance. The company was the first among its peers to work with classified material, through a supply arrangement channelled via Amazon's cloud infrastructure.