AI platforms are monetising journalism without paying for it, hollowing out the news industry’s revenue base

Real-time news is central to AI’s value proposition; without it, AI tools revert to outdated, limited systems

Governments must mandate licensing, transparency, and regulatory oversight to prevent the erosion of independent journalism

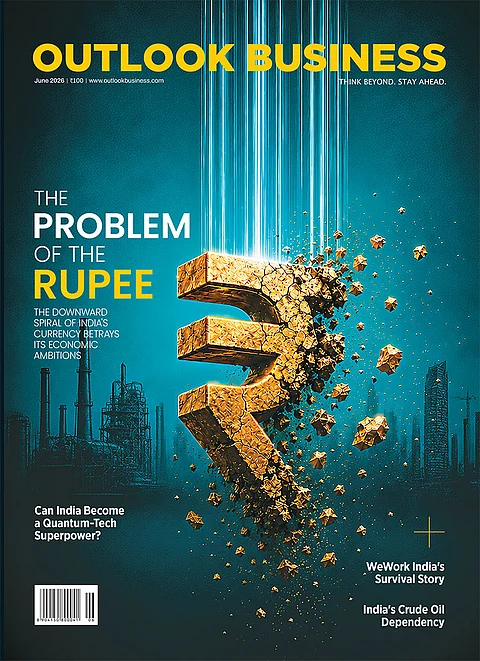

There is a war on right now, and trillions of dollars hang in the balance. Unlike the trade disputes that make front pages, or the tariff standoffs that dominate economic commentary, this one is invisible to most people, even as it shapes the very information they consume. At its heart, it is a battle over who pays for the facts the world runs on.

Every morning, governments make policy decisions, central banks weigh interventions, investors rebalance portfolios, traders execute billion-dollar positions, and business leaders chart strategic pivots, all on the basis of real-time, verified, credible news. The intelligence that powers these decisions does not emerge spontaneously. It is the product of reporters who spend years building sources, editors who enforce standards, legal teams that fight for access, and institutions that stake their reputations on every published word. That machinery is expensive. And it needs to be paid for. Imagine forex, equity and commodity traders relying on unverified AI-generated analysis or Instagram reels to make investment decisions amid an ongoing war in the Middle East. It would not only distort price discovery and undermine market efficiency, but also heighten systemic risk for a wide range of stakeholders across the financial ecosystem.

The first wave of disruption came with Google. When the search giant began aggregating headlines and snippets, traditional publishers felt the sting of a peculiar paradox: their content was driving traffic and advertising revenue to Google's platform, while Google funnelled only a fraction of that value back to the original producers. Publishers complained, lobbied, litigated. Some struck content-licensing deals. The argument was heated, but at least the logic was not entirely broken. A reader who clicked through to a news story was, however briefly, a visitor to the publisher's turf. Traffic could be monetised. Audiences could be retained. The equation was imperfect, but it was not zero-sum.

With the emergence of generative AI, even that imperfect equation has collapsed.

Today, AI platforms, including ChatGPT, Perplexity, Google Gemini, and others, routinely ingest, synthesise, and deliver news content directly to users as confident, polished responses. The user gets the information. The AI company gets the engagement. The news publisher gets nothing: not a click, not an impression, not a cent. The pipe that once carried readers from aggregator to source has been sealed shut. In the AI era, the destination is the aggregator itself.

Consider the analogy of pharmaceutical innovation. When a drug company invests years and billions of dollars developing a novel molecule, the law grants it a period of patent protection, typically 20 years, during which no competitor may replicate and sell that compound without a licence and payment. The rationale is simple and elegant: without the promise of protected returns, no rational actor would take the enormous risk of innovation in the first place. Mankind would be the poorer for it. The patent system is not a favour to corporations; it is an infrastructure for sustaining the engine of discovery.

Now imagine a world where, from the day of a drug's approval, any manufacturer anywhere in the world was free to copy and distribute it without paying the inventor. Drug discovery would become financially unsustainable almost overnight. Research pipelines would dry up. Diseases would go untreated. The very logic that makes pharmaceutical innovation possible, the expectation of reward proportionate to risk, would be annihilated.

This is precisely the world that news publishers inhabit today. News has no equivalent patent protection. There is no exclusivity window during which a publisher can recoup the costs of a scoop before competitors, now including AI systems, freely replicate and redistribute it. A financial newspaper that spends months investigating accounting irregularities at a major corporation cannot legally prevent an AI chatbot from absorbing, summarising, and serving that investigation to millions of users the moment it is published, without attribution, without payment, and without a single reader ever visiting the original source.

New York Times publisher A.G. Sulzberger, speaking at Stanford's Graduate School of Business in January 2026, posed the question with memorable directness: if OpenAI cannot acquire Nvidia chips for free to train its AI models, why should it be entitled to free data from news publishers to do the same? The logic is indisputable. Both chips and journalistic content are inputs to the same production process. Both require substantial investment to produce. Both generate enormous value when incorporated into AI systems. One is paid for; the other is not.

What is happening today is a plundering of goods from news factories. This is systematic, large-scale, and treated by the technology industry as entirely unremarkable. It is as if FMCG distributors were legally permitted to walk into manufacturing plants, load their trucks, and sell the goods downstream while the manufacturer received nothing. It is banal in its regularity and criminal in its logic. And it is dismantling the financial architecture on which credible journalism rests.

AI Is Nothing Without News

When Sam Altman was briefly removed from OpenAI in November 2023, hundreds of the company's employees threatened to resign, flooding social media with a declaration that became a rallying cry: OpenAI is nothing without its people. The sentiment was powerful enough to force a reversal within days. The lesson was clear: the value of a technology company lies not just in its algorithms or compute, but in the human intelligence that animates them.

A parallel truth, less frequently stated but equally fundamental, applies to AI's relationship with journalism: for most users, AI is nothing without news.

Pause and consider how people actually use AI chatbots today. A fund manager asks for a summary of the latest central bank commentary before a morning meeting. A logistics executive queries AI for the latest shipping cost data and trade policy updates before presenting to the board. A retail investor asks whether a company's earnings announcement changes the outlook for its stock. A corporate communications professional seeks to understand how a competitor's recent product launch has been covered by the media. In each of these cases, and in countless others that play out millions of times a day, the utility of the AI system depends entirely on its access to fresh, verified, real-world journalism.

Bar AI systems from scraping credible news published in the past 30 days, and the consequences would be immediate and severe. The polished, confident AI responses that users have come to rely on for business decisions, market analysis, and current affairs would dissolve into hedged uncertainty and outdated context. The tool that has transformed productivity for knowledge workers around the world would revert to something far less useful: a clever but temporally stranded system unable to engage meaningfully with the present.

This is not speculative. When ChatGPT launched in late 2022, it was remarkable for its fluency and breadth, but its practical utility for most professionals was severely limited by a single caveat: it had been trained on data only up to a certain date. For anything requiring recent context, including evolving market conditions, the latest policy announcements, and breaking corporate developments, the system was functionally blind. Users joked that it was a brilliant conversationalist who had been living in a bunker for two years. It was a novelty, a toy for coders and curious minds, not the decision-support tool it has since become. What changed was the integration of real-time information: the live feed of news and current events that transformed ChatGPT from a parlour trick into a professional instrument.

The AI industry understands this better than it lets on. Perplexity markets itself as an 'answer engine that searches the web in real time' precisely because static knowledge is insufficient for the use cases that justify professional subscriptions. Google's Gemini integrates live search results not as an incidental extra, but because live search is the feature. Real-time, reliable information is not merely one component of AI's value proposition; for the majority of its high-value applications, it is the value proposition.

There is a deep irony, then, in the AI industry's resistance to compensating news publishers. By undermining the financial sustainability of journalism, AI companies are systematically eroding the very resource on which their most compelling applications depend. It is the equivalent of a factory contaminating the aquifer from which it draws its own water supply. The reckoning, when it comes, will be shared.

The Hypocrisy of Regulation

On regulation, there is a precedent worth examining, and it applies directly to this case.

For decades, private FM radio operators in India have been prohibited from broadcasting independent news bulletins. Their licences restrict them to airing only news content sourced from All India Radio, the state broadcaster. This was not an accident of regulatory design; it was a deliberate policy choice rooted in a specific concern: that private broadcasters, driven by commercial incentives and operating without the institutional accountability frameworks of established newsrooms, posed too great a risk of amplifying misinformation at scale. Radio, with its unique capacity to reach mass audiences instantaneously, including those who are not literate, was considered too powerful a vector for unverified information.

The regulatory rationale acknowledged that the dissemination of information is not a neutral act. Context, verification, and accountability are not optional add-ons to journalism. Instead, they are its functional core. A broadcast channel that merely pipes content to audiences, without the editorial checks that validate and contextualise that content, is not a news service. It is an amplifier.

The parallels with today's AI platforms are striking, and the concerns are, if anything, more acute. AI systems hallucinate. They generate confident-sounding falsehoods and attribute quotes to people who never made them. When AI is used to answer questions about current events, financial markets, or geopolitical developments, the gap between what it presents and what is actually true can be consequential in ways that extend well beyond individual inconvenience.

The Indian government has traditionally sought to retain strict control over the producers and disseminators of news, which is why foreign ownership is tightly restricted in print and broadcast media. However, AI companies are not based in India, and this creates a regulatory asymmetry.

The Indian private radio precedent suggests a regulatory toolkit worth revisiting: tiered access rights based on demonstrated editorial accountability, mandatory disclosure requirements for AI-generated news content, and the option, in the most serious cases, of restricting AI systems from disseminating news in categories where accuracy is most critical and the consequences of error most severe.

The Time for Government to Act

When the COVID-19 pandemic struck, governments around the world faced the necessity of designating essential services: activities so fundamental to social functioning that they had to be maintained regardless of economic disruption. In India, as in most democracies, journalism was on that list. Reporters were permitted to move, newsrooms were kept operational, and the flow of verified public information was treated as a non-negotiable public good. The classification was not sentimental. It reflected a hard-headed recognition that democratic societies cannot function without institutions capable of producing credible, independent information.

That recognition has not disappeared. But the financial conditions that make such institutions viable are under unprecedented strain, and policymakers have been slow to respond with commensurate urgency.

In the United Kingdom, something important has just begun. The BBC, the Financial Times, The Guardian, Sky News, and The Telegraph, five of the most significant news organisations in the English-speaking world, have jointly formed SPUR: the Standards for Publisher Usage Rights coalition. In their founding open letter, the five institutions describe a world in which their reporting, archives, and original content have become foundational training material for AI systems, scraped, copied and reused with no common standards to enable permission or payment, weakening the economic model that supports journalism. Their goal is not to stop AI from using journalism, but to ensure that it does so through rights-cleared, accountable channels that provide fair compensation.

In the United States, the New York Times has sued both OpenAI and Perplexity for copyright infringement. Other publishers are watching closely. The legal terrain is contested and the outcomes uncertain, but the direction of travel is clear: the free-rider era of AI content acquisition is ending, whether by commercial negotiation, legal ruling, or regulatory mandate.

For India, the stakes are especially high and the urgency correspondingly great. The Indian news media ecosystem, already under financial pressure from digital disruption, is particularly exposed to the AI content drain, both because it lacks the scale to negotiate directly with global AI platforms and because it operates in a regulatory environment that has not yet grappled seriously with these dynamics.

There is a broader concept that has acquired new currency in recent years: technological sovereignty. Governments across the political spectrum have come to understand that dependence on foreign technology, including for semiconductors, for cloud infrastructure, and for communications, creates vulnerabilities that are as strategic as they are commercial. The same logic applies to information. A media ecosystem that is financially hollowed out by foreign AI platforms does not merely threaten the business interests of news companies. It threatens the integrity of the information environment on which democratic governance, judicial accountability, and market function depend.

Western institutions will always protect their own interests first. This is not cynicism; it is geopolitical realism. Whether a Democrat or a Republican sits in the Oval Office, Washington's instinct will be to create conditions in which American technology companies can capture maximum value from global information flows. The platforms that are today ingesting Indian journalism for free will, if unchecked, continue to do so until there is no Indian journalism left capable of feeding them.

The policy ask is not radical. It does not require governments to nationalise news, or to break up technology companies, or to wall off the internet. It requires, at minimum, three things: first, that AI systems operating in national markets be required to license news content on fair, transparent, and commercially reasonable terms; second, that mandatory provenance disclosure be attached to AI-generated responses that draw on journalistic sources; and third, that regulatory bodies develop active frameworks, analogous to the ones India already has for broadcasting, to govern the dissemination of news-related content by AI systems in high-stakes domains.

No democratic society can function sustainably without institutions capable of producing credible, independent, real-time information. No AI system can deliver its highest-value applications without those same institutions continuing to function. The interests, for once, are convergent. What is needed is the political will to act on them, before the factories have been emptied and there is nothing left to protect.