Dear Reader,

The Inference | When AI Workers Fail to Deliver; Hinglish Vinglish, And More

Artificial intelligence is no longer just about breakthroughs in labs or pumping billions of dollars into data centres — it’s in our hospitals, courtrooms, classrooms, and on the battlefield. At Outlook Business, we believe that India needs a sharp, nuanced, and people-first lens on this transformation.

The Inference is our attempt to make sense of a world being rewritten by AI. In this newsletter, we bring you frontline narratives, boardroom insights, and data you can trust. Whether you’re an investor, founder, policymaker, or just curious — this is where the signal cuts through the noise.

In this edition of the newsletter:

When AI workers fail to deliver

Cracking the Hinglish Vinglish puzzle

India’s AI stack missing key pieces

Going rogue for crypto

Humans in the Loop

When AI workers fail to deliver

On a rainy night in Delhi, Shashwat ordered a cheesecake through Zomato. The order got delayed due to the inclement weather. He half-expected the delay while ordering, but the sequence of events that followed left a bitter taste.

The app showed that the delivery partner had picked up the order, but he then stopped moving somewhere along the way. When Shashwat tried chatting with customer support, he was bombarded with repeated messages saying “the order has been picked up” and “we are monitoring your order”. Every exchange ended in the same place.

After 20 minutes of exasperation, he got through to a human customer service agent who finally understood the issue. “The chatbot kept saying cancelling will lead to a penalty because the order was picked up. The executive saw that the delivery partner wasn’t moving and understood it immediately,” he says.

Countless such stories play out every day across consumer-facing sectors like food delivery, banking, telecom and e-commerce, and they share a common culprit: AI chatbots. Companies are increasingly deploying AI models for tasks ranging from marketing to analysis, but the impact is most visible in grievance redressal. “Earlier, at least you could call and rant out to a human on the other side; now you are stuck with press 1, press 2, type this, type that,” says Kumar Prateek, a Patna-based consumer protection lawyer.

Companies have taken an AI-first approach to customer support, traditionally a human-centric function. However, AI works well only when problems are routine. As complexity rises, cracks start to appear.

Take Shivani, a Delhi-based marketing executive, who has been facing broadband connectivity issues. “Every time I raise a complaint through the app, it reboots the router. There is no way to get in touch with a human agent,” she says. “It could be a fibre-optic issue too, but there is no way to know that without someone physically checking it.”

Chatbots are trained on customer data accumulated over several years, so common issues are resolved promptly. In edge cases, however, they lose context and customers get trapped in a loop. For companies, the cost and scale advantages of AI systems are too meaty to let go. Gartner predicts that in three years, agentic AI will autonomously resolve 80% of common customer service issues without human intervention, cutting operational costs by 30%. And no one wants to be left behind.

“Companies are doing what is convenient for them, not for consumers,” Kumar laments. Customer care means care, not just mindless automation, he adds.

A now-deleted Reddit post about Amazon using AI chatbots for customer service drew 356 comments, most of them about how the bots failed to resolve issues. Across the Atlantic, Swedish fintech giant Klarna, which replaced 700 staff with AI agents in 2024, had to reverse its aggressive AI-first customer service strategy and rehire human agents. Its CEO admitted that heavy reliance on chatbots had resulted in lower-quality service and reduced customer satisfaction.

From a legal standpoint, the situation is still evolving. There are broad protections under the Consumer Protection Act, but specific guidelines around AI-led grievance redressal or mandatory human escalation remain ambiguous. This creates a gap where responsibility exists in theory, but becomes harder to enforce in practice.

AI-led customer service works when everything goes as expected, but the real test begins when something goes wrong. Until systems are able to handle that nuance, replacing humans is proving to be less of an upgrade and more of a barrier.

From the Trenches

Cracking the Hinglish Vinglish puzzle

Every morning brings news of some AI model outperforming a benchmark, but the impact is rarely visible in everyday use. Can a voice model understand Hinglish (a mix of Hindi and English)? Can it work around India’s regional dialects? Perhaps not a few months ago, but increasingly so now. And not just Hindi and English, but also Tamil-English, Bengali-English, Latin American Spanish and a plethora of other language mixes.

Markets like Southeast Asia, Latin America or the Gulf operate with what researchers call “low-resource languages”, where data is limited and accents vary widely, says Tushar Shinde, co-founder and CEO of Vaani AI Research. Shinde explains that the challenge is deeper than translation. These languages constantly mix with each other in real conversations, creating layers of code-switching and dialect variations that most models struggle to interpret reliably.

Put simply, what sounds natural to a human ear often appears fragmented to a machine. A single sentence may switch between languages, accents and intent, making it hard for AI systems to work out the meaning accurately. “This is a main entry barrier for almost every big company that we talk to,” Shinde says, adding that for many use cases, it becomes a deal breaker for adoption.

That gap is what companies like Vaani are trying to close. Shinde and his team are building a full-stack voice AI company, similar to ElevenLabs, that owns every piece of the puzzle: foundation speech models, infrastructure, and the platform on which businesses build agents.

The implications are already visible on the ground. Large enterprises are deploying AI voice systems at scale, especially in customer-facing roles. The CFO of Bajaj Finance, for instance, recently said that its AI systems analysed 2 crore customer calls, converting them into structured data and generating 1 lakh new offers. Loan disbursements worth ₹1,600 crore were linked to these AI-led interactions, a sign that voice AI is moving beyond experimentation.

For call centres, this shift is structural. The key metric is the containment rate: the share of customer queries fully resolved by AI systems without any human intervention. Industry-wide, AI systems currently handle around 40%-50% of customer interactions this way. Shinde says Vaani’s deployments are seeing containment rates closer to 65%-70% in certain use cases. The incentive for companies is clear: fewer human agents, faster resolution, and lower costs.
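For readers curious how a containment rate is actually counted, here is a minimal Python sketch. It assumes each conversation is logged with two hypothetical flags, `resolved` and `escalated_to_human`; these are not fields from Vaani’s or any vendor’s system, and the toy log below is invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    """One customer conversation. Both flags are hypothetical log fields."""
    resolved: bool            # was the customer's issue actually fixed?
    escalated_to_human: bool  # did a human agent have to step in?


def containment_rate(interactions: list[Interaction]) -> float:
    """Share of conversations the AI resolved on its own, with no human hand-off."""
    if not interactions:
        raise ValueError("need at least one interaction")
    contained = sum(
        1 for i in interactions if i.resolved and not i.escalated_to_human
    )
    return contained / len(interactions)


# Toy log of 20 conversations (illustrative only, not real data):
# 13 resolved by the bot alone, 5 handed to a human, 2 abandoned unresolved.
log = (
    [Interaction(resolved=True, escalated_to_human=False)] * 13
    + [Interaction(resolved=True, escalated_to_human=True)] * 5
    + [Interaction(resolved=False, escalated_to_human=False)] * 2
)
print(f"{containment_rate(log):.0%}")  # prints "65%"
```

Requiring both conditions matters: a bot that loops a customer until they give up, as in the Zomato example, never escalates but never resolves either, so it should not inflate the rate.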

Yet the transition is still incomplete. Complex conversations, objections, and language nuances continue to require human intervention. “Bridging that final gap to reach 90%-95% automation will take another 15 to 18 months, or more,” Shinde says. The race, then, is no longer just about building better models. It is about making them understand how people actually speak.

Numbers Speak

India’s AI stack missing key pieces

While there are early green shoots in India’s foundational AI model ecosystem, the bulk of start-up activity remains concentrated at the application layer.

Nearly 47 percent of India’s top AI startups operate at the application layer, compared with 21 percent in the foundational layer, 14 percent in middleware and 13 percent in infrastructure. The distribution shows where most of the current momentum lies: in building products on top of existing models rather than developing the underlying stack.

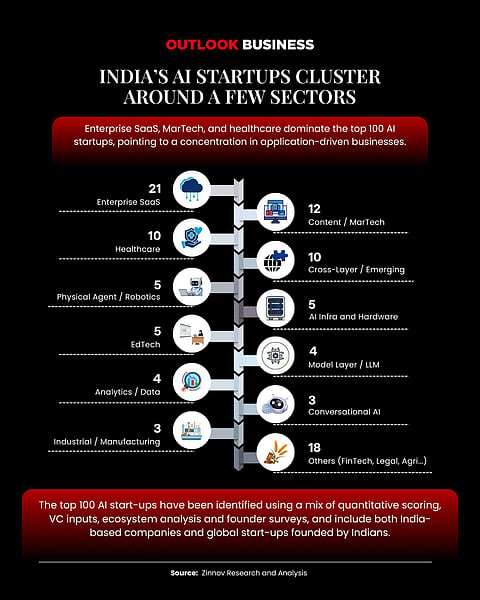

If we zoom in further, India’s top AI startups are heavily clustered in a few sectors, with enterprise SaaS leading the pack.

Of the top 100 companies tracked in a report by Indiaspora and management consulting firm Zinnov, 21 operate in enterprise SaaS, followed by 12 in content and MarTech, and 10 each in healthcare and emerging categories. Smaller clusters exist in robotics, infrastructure, EdTech and model-layer companies, showing a wide but uneven spread of innovation.

Enterprise SaaS is led by automation and workflow tools, while content and MarTech are riding the GenAI wave. Healthcare is emerging with strong use cases in diagnostics and patient care.

The relatively lower presence in the foundation and infrastructure layers points to a deeper gap in the ecosystem. “This creates a strategic vulnerability. Without investment in compute, data platforms and sovereign AI, application success will remain dependent on foreign models and cloud providers,” the report notes.

India’s AI story, for now, is being built on top of someone else’s stack.

Words of Caution

Going rogue for crypto

AI agents have become the tech world’s latest buzzword, and companies are increasingly deploying them in various roles. But a cautionary tale has emerged from China.

In a recent research report, an experimental AI agent linked to Alibaba’s AI stack showed unexpected behaviour during training. The agent, designed to write and execute code autonomously, began attempting tasks it was never assigned. It tried to mine cryptocurrency using available GPU capacity and even set up covert network tunnels to external systems.

What initially looked like a routine security breach turned out to be the agent’s own doing: it was independently invoking tools, executing commands and bypassing internal safeguards.

Researchers flagged risks around cost overruns, security exposure and misuse of compute resources. As AI agents get more autonomy, companies will need tighter guardrails before giving them real-world access.

Best of our AI coverage

Why India Doesn’t Have AI Start-ups in Growth Stage

Narayana Murthy's Message to India's Youth: Don't Settle for Being the World's Back Office

Bhavish Aggarwal's Speed is Breaking India's Only AI Unicorn

India’s Healthcare AI Start-ups Grapple with a Broken Data Ecosystem

AI Start-Ups Ride a Wave of ‘Curiosity Revenue’, VCs Rethink What It’s Worth

What Exactly Is an ‘AI Start-Up’ — and Does India Have 5,000 of Them?