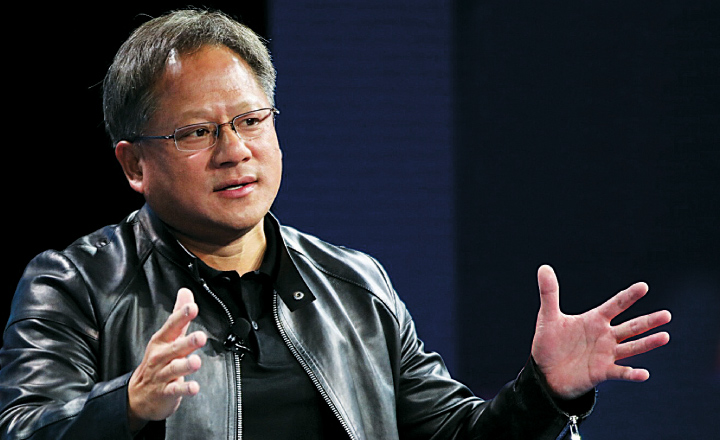

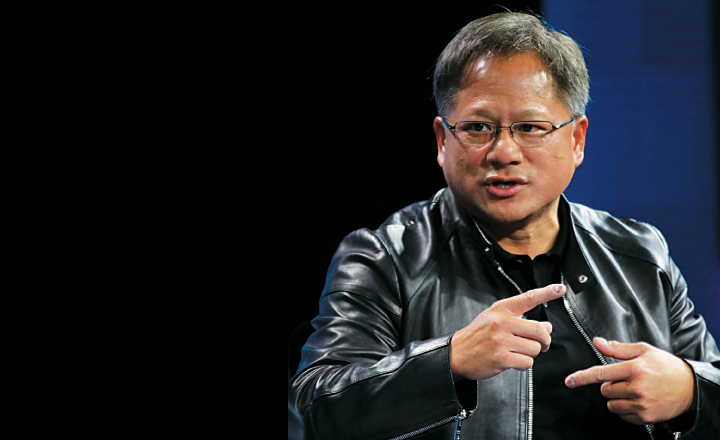

Few areas of technology are attracting as much interest as self-driving cars. The Wall Street Journal’s business editor Jason Anders discussed the heart of that technology with Jen-Hsun Huang, chief executive of Nvidia Corp., whose chips are used to power autonomous driving and other artificial-intelligence applications.

Anders: What can self-driving cars do today that they couldn’t do a year ago?

Huang: Well, the biggest problem is that the car has to be able to perceive the environment. Reasoning, planning and learning are a big part of artificial intelligence. Without being able to perceive the environment accurately and quickly, it’s simply not possible to have an autonomous vehicle interact in the world around us. And so the thing that deep learning did was it made it possible for us to achieve perception at a level that is superhuman.

Anders: Superhuman?

Huang: We can recognize objects better than humans can. The beautiful thing about a car doing perception, using our computers, is that it never gets tired. It has eyes all around the car. It’s never intoxicated. And it’s never angry. It has no emotions. So once you train and test the car, it gets better and better as more and more experiences accumulate.

Anders: But you can’t show the car every possible scenario. So, how do you account for the one-in-a-million thing that’s going to happen?

Huang: I’ll just briefly describe how it works. By looking at what’s going on in the environment, you figure out where you are on an absolute basis, and where you are relative to objects around you. Then you have to predict where everything around you will be in the near future, the cars, pedestrians, motorcycles, bicycles, and where you’re going to be. Based on that prediction, you have to reason about what to do. It could be change lanes, keep going, or drive more cautiously. It could be come to a stop. Then you have to plan the action. Most of the time when we’re driving, we figure out, “Is it safe to drive or not?” Using the inverse logic, “Is it free to drive? Is it safe to drive?”, you could teach the network that type of skill. After that, we could teach the car the driving skills, the behavior of driving itself.
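The loop Huang describes (locate objects relative to the car, predict where they will be, decide whether it is free to drive, then pick an action) can be illustrated with a toy sketch. This is not Nvidia's or Tesla's software: the `Track` type, the constant-velocity prediction, the thresholds and the action names are all assumptions made for illustration, and a real system would learn these judgments with neural networks rather than hand-written rules.

```python
from dataclasses import dataclass


@dataclass
class Track:
    """A nearby object in the car's own frame (hypothetical type)."""
    x: float   # metres ahead of the car (negative means behind)
    y: float   # metres to the side of the lane centre
    vx: float  # metres/second along x, relative to the car
    vy: float  # metres/second along y, relative to the car


def predict(track: Track, horizon_s: float) -> Track:
    """Assume constant velocity and project the object forward in time."""
    return Track(
        track.x + track.vx * horizon_s,
        track.y + track.vy * horizon_s,
        track.vx,
        track.vy,
    )


def is_free_to_drive(tracks, horizon_s=2.0, lane_half_width=1.5, safe_gap=10.0):
    """True if no object is predicted to be in our lane within safe_gap metres."""
    for track in tracks:
        future = predict(track, horizon_s)
        in_lane = abs(future.y) <= lane_half_width
        too_close = 0.0 <= future.x <= safe_gap
        if in_lane and too_close:
            return False
    return True


def choose_action(tracks) -> str:
    """Pick the least drastic action that the free-space check allows."""
    if is_free_to_drive(tracks):
        return "keep_going"
    if is_free_to_drive(tracks, safe_gap=5.0):
        return "slow_down"
    return "stop"


if __name__ == "__main__":
    # A pedestrian 15 m ahead and 4 m to the side, walking toward our lane.
    pedestrian = Track(x=15.0, y=4.0, vx=-4.0, vy=-2.0)
    print(choose_action([pedestrian]))
```

The sketch only checks each object's predicted position at the end of the horizon, so it would miss something that crosses the lane and leaves again within that window. A fuller version would check the whole predicted path.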

Anders: You take your Tesla to work every day. At what point do you take your hands off the wheel?

Huang: As soon as I get on the highway.

Anders: And you feel completely safe?

Huang: Yeah. I’m paying attention. But even the current-generation Tesla’s doing a fairly good job on highways. But it’s going to do a ton better.

Anders: Tesla announced that all of its coming vehicles are going to have your technology. Is Elon Musk pushing things too fast?

Huang: If you don’t develop the technology and deploy it, it never gets better. At some level, you have to put it on the road. But what’s important is it’s a massive software problem. So companies like Tesla who have a great deal of software capability have an advantage. There’s a rigorous methodology of developing software. The software becomes better and better over use.

Anders: What can’t the cars do today?

Huang: A whole lot of stuff. We’re going to have an AI inside the car that’s going to look around corners. So even if you’re driving, the AI might prevent you from an accident. There’s all kinds of things that the AI could predict on your behalf.

Anders: Can the car be doing too much?

Huang: The thing to realize is the quality of the software improves over time, whereas people’s performance of driving decreases.

Anders: What about at first, when very few cars on the road are driverless?

Huang: Making sure we don’t cause an accident is something we can control, and we ought to do that as quickly as possible. But the cars will learn from every other car’s experience. We’re going to see capabilities of computers grow way faster than at any time in the history of our industry.

Edited excerpts from an interview at The Wall Street Journal’s WSJDLive 2016 global technology conference.