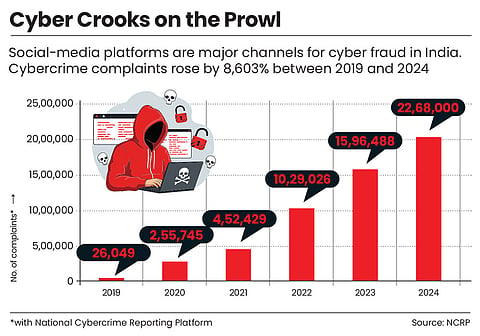

Seema (name changed), a 34-year-old housewife from Darjeeling in West Bengal, was scrolling on Instagram when she came across a profile named “Investor Alliance”, which promoted a scheme promising high returns and stock trading opportunities.

Riots, Frauds and Threats to Sovereignty: The Dark Side of Safe Harbour to Big Tech in India

The safe-harbour provision gives Big Tech the leeway to ignore misinformation on their social-media platforms for profits. India must axe this legal shield

She followed a link to join a WhatsApp group offering stock-market training and began to learn the basics of trading. Finally, she felt she could earn some much-needed money to complete the long-pending construction at her house.

She invested around ₹1.5 lakh in a company named “Skyrim”. The returns shown to her appeared lucrative at 20X. But when she tried to withdraw her money, she was asked to pay an additional ₹1 lakh as “charges”.

After discussing it with her husband, she realised it might be fraudulent. When she demanded her money back, the fraudsters stopped responding. Soon after, she was removed from the group.

She filed a first information report (FIR) against unknown persons, joining countless others who have taken similar steps with little hope of recovering their money.

Thousands of kilometres away in Nagpur in 2025, tensions were already high between Hindu and Muslim communities following protests demanding the removal of Mughal emperor Aurangzeb’s tomb in Maharashtra’s Chhatrapati Sambhajinagar district.

Amid this charged atmosphere, a post began circulating on social media that a chadar (cloth) bearing Quranic verses had been burnt. The claim spread rapidly and it wasn’t long before the situation became violent. Mobs hurled stones at police, attacked homes and set vehicles on fire. More than 30 police personnel were injured.

Later, investigations found that the viral post about the chadar was fabricated. But an eerie feeling of tension still hangs over the city.

However, for 48-year-old Jyoti (name changed), social media was perhaps even more dangerous. For the homemaker from Siliguri in West Bengal, a “doctor” on YouTube wasn’t just a content creator, but a lifeline. With over 90,000 subscribers, his channel promised a miracle: a 72-hour “cure” for diabetes.

She took the leap of faith. She stopped her medication and put away her glucometer. Six months later, she was in hospital, her blood sugar at a dangerous 400 mg/dL.

These incidents lay bare the symptoms of a much larger problem taking root in the world of the internet.

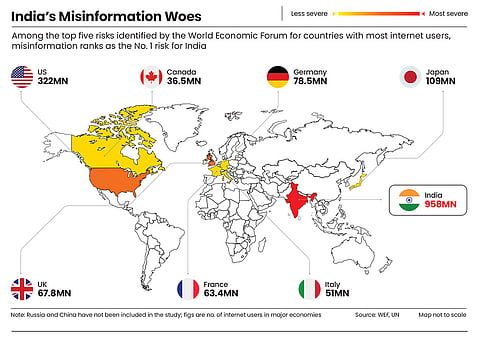

Today, India has around 958mn internet users, nearly three times the size of the US’ 322mn. Matching India’s scale would require combining the internet populations of several advanced economies, including the US, the UK, Germany and France.

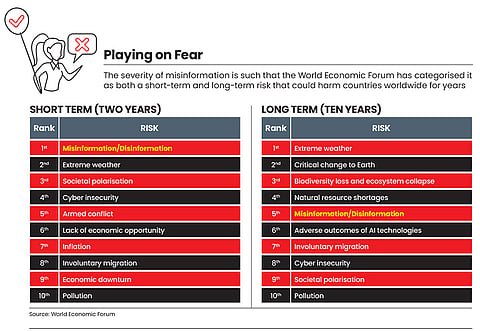

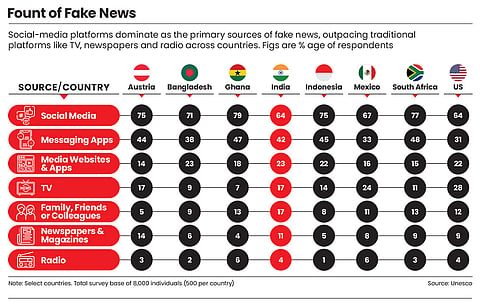

With this scale comes misinformation, which is steadily extracting a social, economic and political toll on the country. For the second consecutive year, the World Economic Forum’s Global Risks Report has ranked misinformation as the most immediate threat to India, placing it above risks such as extreme weather and economic inequality.

The question is: if misinformation is spreading like wildfire, why don’t authorities stop it? Why can’t those pages simply be blocked?

And behind this digital crisis, there are visible architects: social-media platforms run by Big Tech. Their business models appear to treat chaos as a commodity.

If a physical product had caused damage, it would be pulled off the shelves and its makers sued into oblivion. So, why can’t Big Tech companies that provide platforms to create and share such content be held accountable?

It is because these giants have an immunity shield. In India, a safe-harbour provision under Section 79 of the Information Technology Act, 2000, treats them as intermediaries that host user-generated content rather than publishers responsible for content on their platforms.

“Safe harbour was framed at a time when this technology was new and regulations had not evolved. But today, the scale and impact of these platforms are massive,” says Ashwani Mahajan, an economist and the current national co-convener of Swadeshi Jagaran Manch, a political and cultural organisation.

This shield is not unique to India. This provision has become a topic of debate across the world, especially in Brazil and the US. But India is the most vulnerable to misinformation and this legal shield makes it that much harder to stem the problem.

The Leviathan Shield

When 20-year-old Sam (name changed) read about the Covid-19 pandemic for the first time, he got pulled into multiple conspiracy theories online. Soon, he was in a rabbit hole. He was convinced that the virus was manufactured by the government in a lab, vaccines were unsafe, perhaps designed to kill. It didn’t end there.

He spiralled further into the limitless world of social media and its endless conspiracy loop. Over time, Sam’s friends and even his girlfriend deserted him. How did misinformation tear apart relationships built over years?

Former Meta (Facebook’s parent company) executive-turned-whistleblower Frances Haugen offered some answers on how people lose touch with reality on social media when she released internal memos and documents in 2021.

It is not that Big Tech doesn’t have the tools to stop misinformation. During the 2020 US presidential election, Facebook temporarily cooled the news feed to deprioritise inflammatory political content, Haugen told The Guardian. But as soon as voting concluded, the “safety” mode was switched off.

The reason? Growth. “Facebook has realised that if they change the algorithm to be safer, people will spend less time on the site... and [Facebook] will make less money,” Haugen told CBS’ 60 Minutes in 2021.

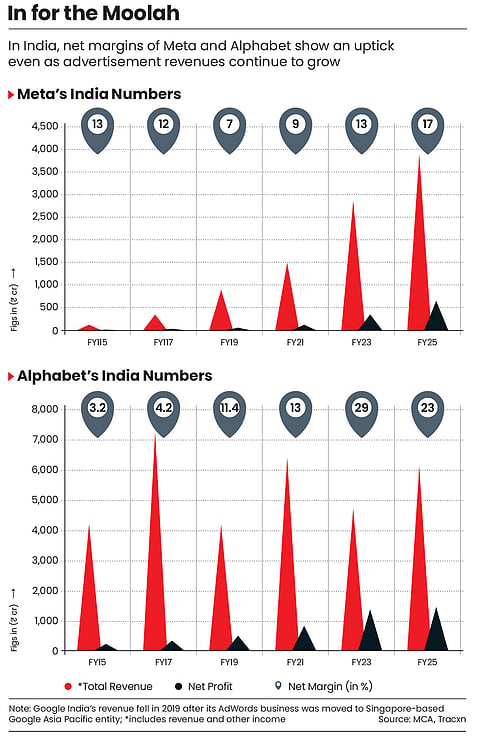

Today, Big Tech earns the majority of its revenue from advertisements. In 2024–25, Alphabet (Google’s parent company) reported $402.8bn in revenue with over 70% coming from advertising, while Meta generated $200.97bn, of which 98% was ad-driven. They are therefore incentivised to keep users on the platform because that is the only way users see ads and click on them.

This business model is their golden goose. To protect it, these companies rely heavily on safe harbour, which ensures they aren’t held liable for what users post.

“If companies were fully liable for all content, the cost of monitoring and moderating content at scale could become extremely high,” says Margarida Silva, a researcher on Big Tech lobbying at the Netherlands-based think tank SOMO.

“Protecting their business model and profitability is part of the broader picture. Safe-harbour provisions allow platforms to host large volumes of user-generated content without being legally responsible,” she adds.

When this shield is threatened, Big Tech firms don’t hesitate to flex their financial muscles. In the US alone, Meta, Alphabet and TikTok spent over $51mn on lobbying in 2025. Meta has publicly disclosed Section 230 (the safe-harbour law in the US) as one of its lobbying priorities.

These tactics are applied globally. Take Brazil for example. When a 2023 Bill threatened to make social-media platforms liable for third-party content and misleading ads, Alphabet spent $350,000 on a media blitz, as per a report in Publica, calling the proposal “a serious threat to freedom of expression”. Other platforms followed, and the Bill was stalled.

About a year later, a US child-safety Bill advanced in the Senate but stalled in the House. Around the same time, Meta announced a $10bn data centre in Louisiana, the home state of Republican leaders Mike Johnson (House Speaker) and Steve Scalise (House majority leader), who controlled the fate of the Bill.

Industry insiders concur that similar patterns play out in India. Attempts by Big Tech to dilute India’s digital laws are not new, Justice Bellur Narayanaswamy Srikrishna, a retired Supreme Court justice, tells Outlook Business.

But lobbying is just a small part of a bigger problem in India. When it comes to prioritising online safety, these platforms often treat markets like India as secondary.

“I became concerned with India even in the first two weeks I was in the company,” Haugen told Time in November 2021.

Documents leaked by her reveal a stark disparity: 87% of Facebook’s global budget spent on classifying misinformation is allocated to the US, leaving just 13% for the rest of the world. For context, North American users are only 10% of its daily users.

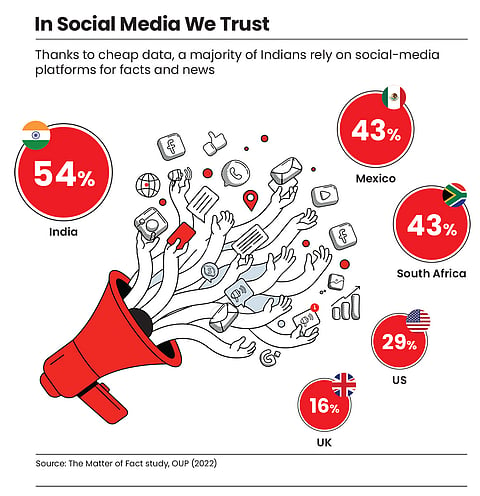

India, with its 22 official languages and high-risk misinformation landscape, remains largely under-monitored. This is despite Meta identifying the country as one of the most “at-risk” markets globally due to the volume of misinformation, according to internal reports seen by the Associated Press in 2021.

For Big Tech platforms, India is a market that delivers scale, not commensurate revenue. The gap in average revenue per user (ARPU) explains why. A user in the US or Canada is worth $50 to Meta; a user in India is worth just $3.

The same pattern is visible at Alphabet, which earned about $170bn from the US in 2024, compared with roughly $640mn reported by its India subsidiary, even though India has a far larger user base than the roughly 320mn users in the US.

“Markets like India are treated as ‘scale markets’. Safety becomes reactive rather than foundational. If something explodes into a headline... resources surge temporarily,” Kelly Stonelake, former Meta executive turned whistleblower, tells Outlook Business.

In effect, markets like India help these platforms drive engagement and inflate global user metrics that appeal to investors.

“Because when you’re a global company, you can show 4bn active users to the public market and they’ll give you a better market cap for it. Your ARPU comes from America, but you get your DAU [daily active user] from India. When you join the numbers, they look nice,” Cred founder Kunal Shah told news website Rest of the World in 2020.

And while Big Tech is too busy adding to its billions of dollars, a hidden tax is wreaking havoc on society.

Mounting Toll

In early 2023, the streets of Manipur were already burning due to ethnic clashes between the Kuki and Meitei communities. The situation was further aggravated by misinformation amplified online. A picture circulated widely on social-media platforms, purportedly showing the body of a Meitei woman who had been sexually assaulted and killed by members of the Kuki tribe during the ongoing violence.

The image was later found to be of a woman’s body in Delhi.

According to a report by the Australian Strategic Policy Institute, a think tank, Chinese-linked social-media accounts were being used to amplify tensions in the region. These accounts used phrases such as “there is a little China in India that does not speak Hindi and refuses to marry Indians” to deepen the communal divide, the report said.

Misinformation has reared its ugly head in the past too. In 2012, there was a sudden exodus of Northeastern people from different parts of India to their home states. The trigger was rumours on social-media platforms that Muslims were planning attacks on Northeastern communities after Eid, in response to ethnic clashes between Bodos and Bengali-speaking Muslims in Assam’s Kokrajhar.

Doctored images and videos showing violence against minorities in Assam were widely circulated. The message: “Get out before Ramzan. After Ramzan, there will be violence.”

Cities across India witnessed the debilitating impact of this misinformation. From Bengaluru alone, an estimated 40,000 people fled to their home states within a few days.

Railway stations became sites of desperate congestion as special trains were deployed to ferry panicked students and workers back to the Northeast. Those with no means to leave remained gripped by fear.

Investigations later revealed a coordinated campaign. RK Singh, then Union home secretary, noted that 76 websites were identified as primary sources of these morphed images, with the bulk of uploads traced back to Pakistan.

These events demonstrate how Big Tech prioritises user engagement over accuracy, leaving society’s most vulnerable to deal with the consequences. The societal and political wounds inflicted by inflammatory social-media posts bleed into a country’s economy too.

The Indian economy, which economists often describe as capital-scarce, needs sustained inflows of foreign investment to fuel its Viksit Bharat ambition. But the chaos engineered on social-media platforms threatens to stall any momentum. “It may influence the perception of global investors, who may lose confidence in India. This might also impact our global ratings. In that sense, misinformation acts like a hidden tax on development,” argues Mahajan.

Global rating agencies like S&P, Moody’s and Fitch do not just assess economic indicators; political stability and the risk of social unrest are also factored into sovereign ratings.

“An analysis of 38 episodes of sovereign ‘rating crises’ over 1997–2017, in which there were at least three notches of downgrades within three calendar years, finds that political and governance issues were the trigger for or main factor in 21% of cases,” according to a study by Fitch.

That is not the full extent of misinformation’s harm to the economy. India’s home-grown start-ups are also paying this hidden tax.

For the founders of agri-consumer brand Eggoz, December 2025 was supposed to be a festive growth period. Instead, it turned into a month of crisis after an influencer posted a video alleging that the brand’s eggs caused cancer.

The impact was immediate and severe. The Jammu and Kashmir government halted shipments, while investigators in Karnataka launched raids. Eggs were removed from school mid-day meal programmes, depriving children of essential nutrition based on a false claim.

By the time India’s food regulator, the Food Safety and Standards Authority of India, issued a clarification debunking the allegations, the damage was done.

“More than 500 videos were posted by influencers,” recalls a source close to the founders. “You had hundreds of voices spreading a claim, while the company could issue only a limited number of clarifications.”

In Mumbai, Kiran Shah, founder of healthy ice-cream brand Go Zero, faced a similar digital backlash. A viral video claimed that his “zero sugar” products contained “300% more sugar”, citing a lab report.

Shah’s inbox received 200–300 messages within a week. “People were genuinely scared,” he says. “Some even asked if it was safe to consume the product during pregnancy.”

Even after the claims were proven misleading, the economic impact lingered, with a 15% drop in sales during a peak period. “The healthy ice-cream category shrank. Search volumes dropped,” he adds.

The irony is that many start-ups spend lakhs of rupees every month on digital advertising to build their presence on social media. Despite spending big bucks, small companies feel they are given short shrift. The inside joke in the ecosystem is that start-ups are just an intermediary for venture-capital money to flow into the balance sheets of Alphabet and Meta.

Anupam Mittal, founder of matrimonial website Shaadi.com, didn’t mince words in a LinkedIn post: “Big Tech has normalised this behaviour by monetising brand keywords and disintermediating the very companies that built demand in the first place.”

When misinformation campaigns happen against smaller companies, they can deal a lasting blow. Veteran industry executive Lloyd Mathias says, “For them, damage can be significant because they often lack large public-relations budgets or crisis-management teams.”

Cambrian Explosion of AI

Today, a new and more lethal weapon has entered the misinformation fray: artificial intelligence (AI).

“You don’t need technical skills anymore,” warns Divyendra Singh Jadoun, founder of Polymath Synthetic Media Solutions. “There are open-source tools where users simply type prompts and generate content.”

Twenty-year-old Rishika (name changed) woke up one day to this new threat. While scrolling Instagram, she received a message from an unknown account. One click and her world collapsed.

“I puked after seeing it,” she says, the memory still a physical blow. A man whose advances she had declined had morphed her face and those of her friends onto explicit content using AI deepfake software.

Before AI burst onto the scene, creating a high-quality deepfake was a 15-day marathon requiring at least 30 minutes of high-definition footage of a target. It was a barrier that kept most casual trolls at bay.

Today, it takes under 60 seconds to create a convincing deepfake video from a single image. The barrier to entry for digital sabotage hasn’t just been lowered, it has vanished.

Those like Rishika, who have suffered at the hands of AI, have a valid question: what is the guarantee that it won’t happen again? The numbers validate their fear.

Deepfake content pieces surged from around 500,000 in 2023 to nearly 8mn in 2025, as per the Entrust Cybersecurity Institute.

Of course, social-media platforms amplify the risk as they enable these deepfakes to spread at scale. And even the highest office isn’t safe.

When a deepfake video emerged showing President Droupadi Murmu offering dubious financial advice, police officer Fakkeerappa Kaginelli reported it to Facebook, expecting swift action. The response: “The content did not violate our policies.”

The video stayed up. Under “safe harbour”, the platform remained untouchable. It took a public uproar from media and policy groups to finally force a takedown. In the lightning-fast world of viral misinformation, such a delay is an eternity.

Before the latest amendment, India’s IT rules required platforms to resolve specific complaints within 72 hours. “There are certain platforms that take days to remove content,” says Dewan, the ACP.

“They have not invested enough in law-enforcement networks that enable content blocking and reporting,” he adds.

India faced its most visceral shock yet last year, when the very tools marketed as “cutting-edge” became instruments of systemic harassment. At the heart of the storm was Grok, the AI chatbot integrated into X, owned by the world’s richest tech billionaire, Elon Musk.

Grok introduced a feature that allowed users to generate sexualised images of real people by modifying their photos. With a few keystrokes, users were participating in a viral trend: “Put her in a bikini.” The feature was quickly weaponised to “undress” women and children.

Things quickly got out of hand. The Indian government stepped in and warned X that it could lose its safe-harbour protection if it failed to protect users.

“There is no reason our children should be exposed online to what is legally forbidden in the real world,” French President Emmanuel Macron said recently, championing a social-media ban for children under 15.

In fact, several countries, including Australia, Spain, Malaysia and Norway, have either implemented or are planning social-media bans for children. Even in India, states like Karnataka, Andhra Pradesh and Goa are pitching for a total ban for children. However, recent reports say the Centre is in favour of a more nuanced approach.

“Just a ban won’t make any difference. If platforms can still hide behind safe harbour, they will continue to avoid responsibility,” argues Ellen Roome, who lost her 12-year-old son to suicide following a TikTok challenge. However, she also argues that a social media ban is needed for children.

“It’s about time countries caught up and ensured platforms are held accountable and made safe for both children and adults,” she adds.

Facade of Self-Regulation

But the larger issue is that the infrastructure to protect users remains skeletal at these giant corporations. Whenever talk of content moderation and verification arises, they champion “self-regulation” as a remedy. But this has never worked.

The year was 2020 and India was a nation under siege. The Covid-19 pandemic had locked down its cities and stretched its hospitals to the breaking point.

Then came the second blow: the freezing heights of Ladakh turned into a battlefield. In a skirmish with Chinese troops, the first fatal clash in 45 years along the Line of Actual Control (LAC), 20 Indian soldiers died and over 70 were injured.

While the nation mourned its martyrs, Twitter’s (now X) geo-tagging software displayed Leh, the joint capital of Ladakh, as part of China.

This came at a time when New Delhi was trying to de-escalate the situation through delicate dialogue with Beijing. Despite urgent notices, the correction took days and required “repeated reminders”.

Again, in June 2021, Twitter’s website featured a wrong map of India, this time showing Jammu and Kashmir, and Ladakh as an entirely separate country. This set the stage for one of the most explosive confrontations in the history of the Indian internet. The battleground was the IT Rules 2021.

India’s demand was simple: if you operate in India, you must be accountable to India. The rules mandated the appointment of a local grievance officer, a human being on Indian soil who could be held responsible.

This was also the time when the platform expressed concern about the new IT Rules 2021, describing them as a “potential threat to freedom of expression”. What followed was one of the angriest confrontations between the government and a social-media platform.

The government’s retort was scathing: “If Twitter is so committed, then why did it not set up such a mechanism in India on its own? Twitter representatives in India routinely claim that they have no authority and that they and the people of India need to escalate everything to Twitter headquarters in the US.”

In 2021, another social-media giant was up in arms against the IT Rules. WhatsApp sued the Indian government over the new rules that required “significant social-media intermediaries” to trace the origin of messages. It argued that the new requirement would shatter user privacy.

The government’s pushback was rooted in the sheer volume of citizen suffering. Officials cited lakhs of complaints from ordinary Indians whose lives had been upended by viral lies, which were not addressed by these platforms.

“That is not acceptable,” declared Rajeev Chandrasekhar, the then IT minister, who set the cat among the pigeons when he suggested that safe harbour needs to be re-examined.

“I opposed it then and I oppose it for the same reason: I oppose clean immunity and a safe harbour without any conditions for platforms,” he told The Indian Express in 2023.

This conviction birthed the idea of new legislation in 2023, designed to replace the two-decade-old IT Act. The new law would have flipped the script: safe harbour would become the exception, not the rule. Platforms would be categorised by their function, be it social media or e-commerce, and each would face a specific set of rules tailored to the risks they posed to Indian citizens.

However, not long after this, Chandrasekhar ceased to be a minister and the new law never came into being.

As for Big Tech platforms, the “self-regulation” banner allows them to design their own rules and dodge independent oversight. These platforms have also avoided sharing concrete figures on how much they actually spend to curb misinformation.

One rare disclosure came during a January 2024 US Senate hearing, when Meta said it had spent about $5bn on safety and security in 2023, around 3.7% of its revenue. In the same year, Meta’s official documents, seen by Reuters, projected that it generated $16bn from running advertising for scams and banned goods. Meta, however, denied the claim and told Reuters that the documents “present a selective view that distorts the company’s approach to fraud and scams”.

Alphabet told a US House panel in 2019 that it spends “hundreds of millions of dollars” annually on content review. But the exact figure remains unknown.

While these figures look promising on paper, they are insignificant compared to the hundreds of billions of dollars of revenue these companies rake in each year.

In comparison, industries like pharmaceuticals have non-negotiable safeguards. Developing a new drug typically takes over a decade and costs upwards of $2.6bn. Even then, only about one in 10 drugs that enter rigorous clinical trials ultimately makes it to market. Industry estimates suggest that clinical trials can account for as much as 85% of drug development costs.

Big Tech, by contrast, appears to be moving in the opposite direction.

When Musk took over X, teams focused on moderation and platform safety were let go in a mass company-wide layoff.

Meta’s boss Mark Zuckerberg has said that the company is shifting back to its “free expression” roots, scaling back fact-checking efforts in the US.

In India, the retreat has been even more brazen: Meta slashed fees for professional fact-checkers by a staggering 50%, according to a report by The Hindu.

In January 2022, 80 global fact-checking organisations wrote an open letter to Google, calling its platform YouTube a “major conduit” for disinformation. But there was no response. “Despite writing open letters about controlling YouTube or allowing fact-checkers to fact-check content, Google refuses to take action,” lamented a fact-checker who did not wish to be named.

Responding to a query from Outlook Business on the allegations, Meta said, “We take a comprehensive and evolving approach to tackling misinformation across our platforms.” Alphabet and X didn’t respond to queries at the time of going to press.

These companies argue that verifying information at scale is impossible, but their own systems undercut that argument. Platforms that can micro-target ads, track user behaviour across devices and optimise engagement in real time cannot claim that basic compliance with national law is beyond their technical capacity.

“These are the richest companies in the world, and they have the best engineers in the world. They could fix these problems if they wanted to,” said British actor and activist Sacha Baron Cohen in 2019.

“The truth is, these companies won’t fundamentally change because their entire business model relies on generating more engagement, and nothing generates more engagement than lies, fear and outrage,” he added.

However, the tide seems to be turning as political leaders across the world, including the US, appear increasingly tired of the self-regulation model. At a US Senate hearing in March 2021, lawmakers told major technology companies that the “time for self-regulation is over”.

The “safe-harbour” protections that once made these platforms untouchable are now being viewed as shields for foreign interference, data profiling and even sex trafficking.

“Enough is enough,” declared US Senator Dick Durbin in December last year. “Sunsetting Section 230 will force Big Tech to take ownership over the harms it has wrought,” he said.

In India, the government recently tightened its IT rules.

The new mandate? Social media intermediaries must remove non-consensual intimate imagery and deepfakes within just two hours of a complaint, obliterating the previous 24-hour grace period.

Earlier this year, Ashwini Vaishnaw, Union IT minister, said: “If someone has any problem, they should come and meet me. What is illegal offline is illegal online.”

The Only Option

Every time there is talk of removing safe harbour, Big Tech advances its favourite argument: free speech.

In US Senate hearings, the big suits from these companies warn that removing their immunity will lead to government censorship and the death of expression.

At least in India, the government already exercises its power to ask platforms to remove flagged unlawful content. The curb on unrestrained speech is, in many ways, already happening.

In fact, government URL blocking in India has surged in recent years, rising from 2,799 URLs in 2018 to around 28,000 in 2024, a nearly tenfold increase. Meanwhile, platforms reported more than 1 lakh content removal requests in the first half of 2025.

Meta alone restricted more than 28,000 pieces of content in India between January and June 2025, while Alphabet was asked to remove over 53,000 items across its products and services. X received 29,118 government takedown requests during the same period.

But these takedown requests are often related to posts that are critical or satirical of the government, or those that may harm national interest, or content linked to misinformation around national security.

What about people who fall victim to financial fraud or a patient who avoids seeking treatment after being misled by a social-media post?

“Safe harbour cannot become a permanent shield that allows companies to grow profits while avoiding accountability,” says Mahajan.

Let’s look at British colonial rule as an example. When apologists look back, they often point to the railways as a magnificent gift to India. But the truth is that the railways were built with Indian taxes to serve British interests, designed to transport extracted resources to ports.

Today, Big Tech touts a similar gift. It talks about how social-media platforms are connecting the world and promoting dialogue. But are they truly connecting us or simply laying the tracks for a new kind of exploitation?

Big Tech’s “neutral intermediary” shield is much like the kavach worn by Karna in the Mahabharata, which made him invincible. While the Indian government talks about reconsidering or revising safe harbour, a firm legislative plan is missing.

Thanks to safe harbour, people like Seema who fall victim to misinformation online can’t drag the social-media platforms to court. Until citizens get the right to sue the platforms responsible for their grief, the Digital India dream will continue to be shattered daily by misinformation and deepfakes.

The safe-harbour provision, once meant to nurture a young internet, now shields these Big Tech social-media giants from the consequences of their toxic algorithms and the harms they cause. To protect our digital future, this shield must be removed.